Welcome to the second page of a handbook on self-hosting. Begin here.

Topics covered on this page

- How a reverse proxy works and why you need one

- Creating and understanding docker-compose files

- Fleshing out your docker-compose file

- Hitting the "up -d" button on your self-hosted stack


How a reverse proxy works and why you need one

A reverse proxy is a web server that accepts incoming traffic, analyzes it, and directs it to the appropriate backend service/server. Reverse proxies are often used as load balancers or caching servers, but for the sake of this handbook, we're mostly interested in how a reverse proxy can accept traffic from multiple domains, all directed toward the same VPS, and deliver the appropriate content in turn.

Let's look at this example diagram:

    ssdnodes.com ----|               |-- LAMP stack + PHP files
                     | reverse proxy |
    x.ssdnodes.com --|------ @ ------|-- WordPress blog
                     | 123.456.78.90 |
    y.ssdnodes.com --|               |-- Node.js web app

In this case, we have three domains (ssdnodes.com, x.ssdnodes.com, and y.ssdnodes.com) that are all pointed toward a VPS at the IP address 123.456.78.90. We also have three backend services (a LAMP stack, a WordPress blog, and a Node.js web app) that we want to serve.

When someone types one of those three domains into their browser's navigation bar, the browser uses DNS to look up the IP address of the VPS (remember DNS from the previous page?) and sends its request there. The reverse proxy receives this request, analyzes it, and recognizes, say, that the request is looking for ssdnodes.com. The reverse proxy then sends a request to the LAMP stack, which returns an HTML page. Finally, the reverse proxy "forwards" that HTML page (proxies it, actually, hence the name) to the user's browser.

When they type x.ssdnodes.com into their browser, the reverse proxy returns the WordPress blog, and when they type y.ssdnodes.com into their browser, they get the Node.js app in return.

In this way, a reverse proxy allows you to host multiple websites or web apps from a single VPS.
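If it helps to see that routing decision as code, here's a minimal sketch in Python. It isn't how any particular reverse proxy is implemented, and it doesn't do any actual proxying; it only shows the lookup step, where the hostname in the request decides which backend gets the traffic. The hostnames come from the diagram above, but the ROUTES table, the pick_backend function, and the backend addresses and ports are all assumptions made up for illustration.

```python
# Hypothetical routing table. The hostnames match the example diagram;
# the backend addresses and ports are invented for this sketch.
ROUTES = {
    "ssdnodes.com": "http://127.0.0.1:8080",    # LAMP stack
    "x.ssdnodes.com": "http://127.0.0.1:8081",  # WordPress blog
    "y.ssdnodes.com": "http://127.0.0.1:3000",  # Node.js web app
}


def pick_backend(host_header):
    """Return the backend URL for a request's Host header, or None if unknown."""
    # A Host header may carry a port ("example.com:443"); drop it and
    # normalize the case so the lookup only depends on the hostname.
    hostname = host_header.split(":", 1)[0].strip().lower()
    return ROUTES.get(hostname)


if __name__ == "__main__":
    for host in ("ssdnodes.com", "x.ssdnodes.com", "Y.SSDNODES.COM:443", "unknown.example"):
        print(host, "->", pick_backend(host))
```

A real reverse proxy does the same kind of lookup, then passes the request along to the chosen backend and relays the response. A hostname that isn't in the table gets no backend at all, which is why every domain you point at the VPS also has to be known to the proxy.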

I consider a reverse proxy necessary to self-hosting because it's inconvenient enough to remember the IP address of your VPS, much less the various ports on which each of your services will be running. Also, the reverse proxy will request and serve the Let's Encrypt SSL certificate that will allow you to have HTTPS-enabled websites and apps.

Why is SSL important?

A Let's Encrypt certificate allows you to encrypt the traffic entering and leaving the self-hosting stack on your VPS.

No matter what you'll ultimately be hosting on your VPS via this handbook, SSL is critical. If you're going to host a website or blog, for example, Google treats HTTPS as a ranking signal, and browsers flag plain HTTP pages as "Not secure." If you have any personal information stored on your VPS, SSL will help prevent anyone from reading that information while it travels between your server and a browser. Even if you're only self-hosting a private Git server like Gitea, SSL ensures that the connection between your server and your browser is encrypted.

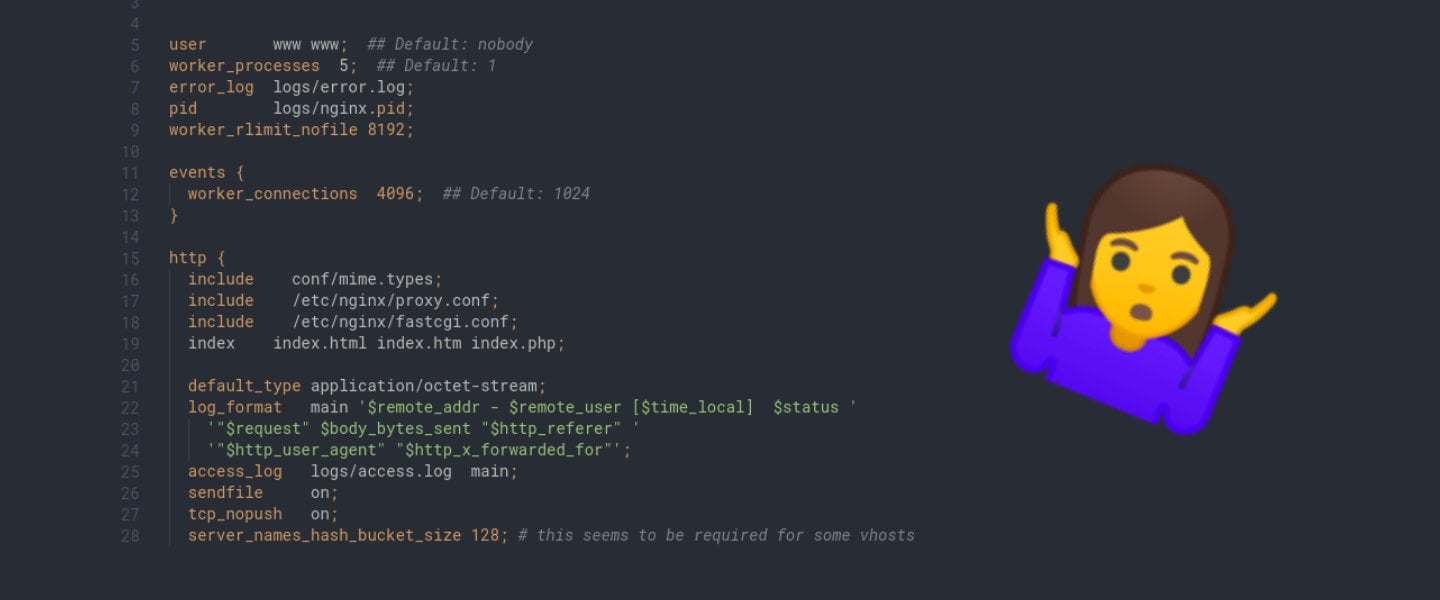

Creating and understanding docker-compose files

Now that you have some context for what we're trying to build with this self-hosting stack, it's time to take a look at the primary tool we're going to use moving forward: docker-compose.

You installed this program on the previous page. docker-compose is a "wrapper" around the docker command-line program itself. It reads a configuration file called docker-compose.yml and launches multiple Docker containers from it, so you don't have to type out long commands with docker.

Log in to your VPS if you haven't already, create a new proxy directory in your home directory, and cd into it.

$ mkdir -p ~/proxy

$ cd ~/proxy

Create a new file using the editor of your choice. I use nano for these kinds of tasks, but you may prefer to use vim or emacs instead. Just replace nano with your editor of choice from here on out.

$ nano docker-compose.yml

Let's start with building out the "skeleton" of a docker-compose.yml file, which will then allow us to talk about how each component works, and the syntax behind it. Inside the new file, type in the
